technophobe

['tekne fōb]

NOUN

A person who fears, dislikes, or avoids new technology.

Yup. I am a technophobe in the strictest sense of the word, with an emphasis on the word "new." I don't trust anything that hasn't been proven. Of course it wasn't always that way.

— James Albright

Updated:

2024-05-17

I embraced computers early on. When I was an engineering student in 1974, our interaction with computers was via punch cards, and you counted lines of code by the thickness of your card deck. In 1983 I wrote a program for my Boeing 707 squadron to automate the chore of computing navigation and fuel logs and I thought that was great. But, in retrospect, the squadron put more trust in me than I deserved. Amateur hour: making gas

Just because I made a mistake as an amateur computer programmer doesn't mean I was alone in this. But before we dive into this any further, we need to talk ones and zeros: Going digital means going binary first

My mistake impacted four airplanes; that's how many we had in our squadron. In 2004 another computer error impacted 800 airplanes. It was a mistake that stayed hidden every time the computer was shut down and restarted, the good old-fashioned reboot. But one time that didn't happen, and the error was unmasked: An Air Traffic Control Center's reboot

As aircraft become more and more dependent on computers, the result of a "software glitch" becomes harder and harder to predict. If an airplane is completely airworthy when flying on one side of a line of longitude, how could an imaginary line in space prevent it from flying according to specs on the other? Consider: The F-22 and the International Date Line incident.

It doesn't take a revolutionary fighter jet to push a computer programmer to the limit; it happens with more mundane aircraft, such as the Boeing 787 Dreamliner. The Boeing 787 and dreams of simpler systems should reinforce the need for the proverbial aircraft Control-Alt-Delete.

You don't have to be flying a billion-dollar fighter jet to have your day ruined by a few lines of code. In fact, you don't have to be flying something built in the last decade or two. Here are a few examples of where being a paranoid pilot comes in handy.

2 — Going digital means going binary first

3 — An Air Traffic Control Center's reboot

4 — The F-22 and the international date line

1

Amateur hour: making gas

From my logbook

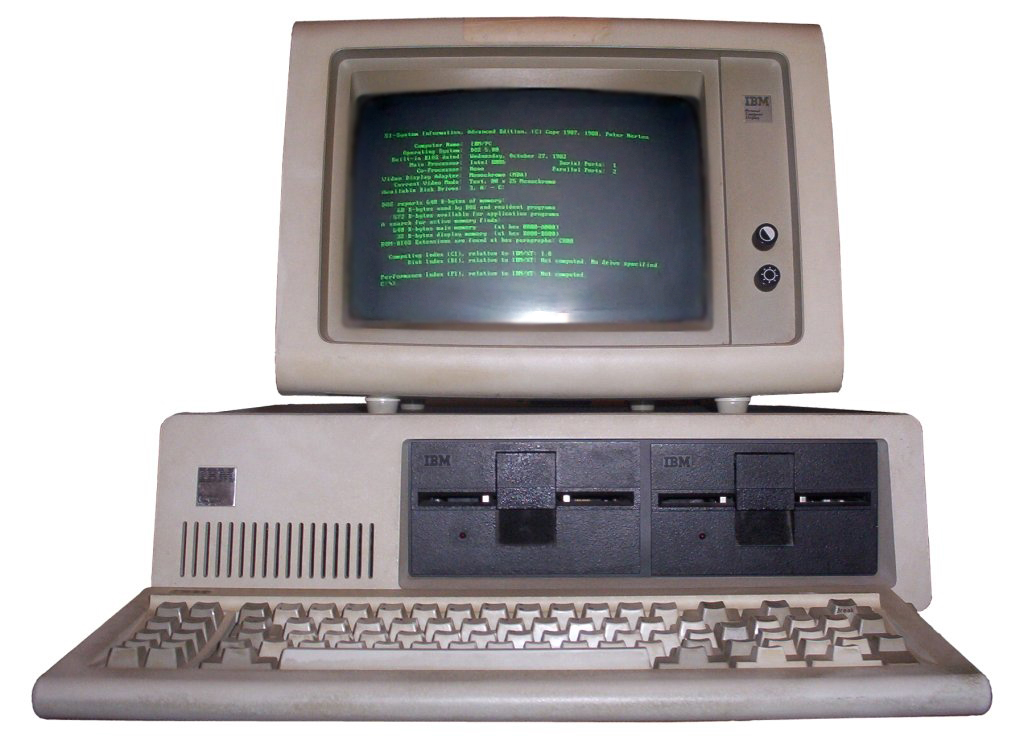

In 1982, I was a copilot flying Air Force Boeing 707s with only one thing in the cockpit that could be remotely called a computer, and that was the flight director. A mission always started the day before with the navigator pulling out a paper chart, a plotter, and dividers. After a few hours he would have a navigation log. Then I would take that log and a book of charts and add fuel computations. I would hand all of that to the aircraft commander who would pick up the phone and order a fuel load for the next day's mission. This ritual took about four hours. All that changed in 1983 when the squadron got its first desktop computer, an IBM PC 5150.

When I say all that changed in 1983, I really mean nothing changed in 1983. The squadron gave the computer to the boss's secretary where it was used for word processing. Meanwhile I bought a Kaypro II computer, something that ran an older operating system called Control Program for Microcomputers (CP/M). These were both 8-bit computers. (More on that later.) Using what I had learned in college programming mainframe computers, I wrote a program for my Kaypro to automate the chore of navigation and fuel logs. I converted that to work on our IBM PC and our squadron started churning out navigation logs in minutes instead of hours.

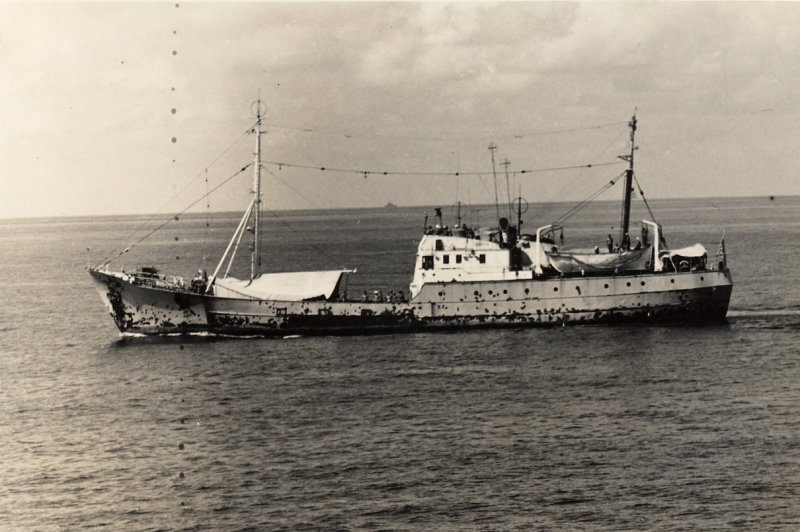

Before I got the program running I upgraded to aircraft commander and was assigned to fly a mission our squadron only got once every few years that required some unusual flight planning. We were required to fly just a couple of hundred feet above the water and head right at Russian spy ships decked out to look like fishing trawlers. These ships became known as AGIs, since the U.S. Navy classified them as "Auxiliary, General Intelligence." We were told they were armed and that it was best for us to fly as fast as possible, as low as possible. So that's what we did. When I wrote the fuel planning software, I made sure it included the airplane's entire operating envelope. Job done.

A few years later I was an examiner pilot and none of our copilots could remember the days of having to chase through charts to compute fuel burns. They just plugged the logs into the software and out came the log. In fact, that was true of many of our aircraft commanders. They just looked at the top line of the fuel log and ordered whatever it said. As an examiner, most of my checkrides were in the local area and were a bit repetitive. When I saw that an "AGI Hunter" trip came up, I eagerly added myself to the schedule. The evening before the flight, I checked the crew's paperwork and thought the fuel load was a little light. Skimming each leg of the flight plan, I would see typical fuel burns of say "-5,500 lbs" for a shorter leg and upwards of "-20,000" for a long one. When I got to the low altitude, high speed leg, the fuel burn was "+10,300 lbs." In other words, they ended the leg with more gas than when they started. Considerably more. I called the logistics branch and added 20,000 lbs to the fuel order. That night I examined my computer code, written many years before, and found the error. I was missing a multiplier for the legs flown at high speeds below 1,000 feet MSL. Nobody had ever flight planned that and my error went unnoticed.

I briefed the crew about this before the flight and they were obviously concerned. Had they busted the trip before it left the ground? I joked that I would have to bust myself since I wrote the program. But I also gave them a few techniques to make sure they could catch this kind of error in the future.

Technophobe Technique

Try to develop an idea of how much fuel your airplane burns in various phases of flight so you can do some quick math to check the computer's prediction. I keep a few numbers in mind for my Gulfstream G500 loaded with enough gas to make it from Boston to London with a cruise altitude of 41,000 feet to keep both pilots off oxygen. I know we can usually make it to altitude using just over 2,000 lbs. of fuel. We can then cruise at Mach 0.90 burning 3,300 lbs. the first hour and average 3,000 lbs. every hour thereafter. If we wanted to stretch it, pulling back to Mach 0.85 saves us about 500 lbs. an hour. Let's say we want to know how much gas is needed to fly five hours at the higher speed. I would say 2,000 lbs. to climb, then 3,300 lbs. for the first hour and then 3,000 lbs. each for hours 2, 3, 4, and 5. That comes to 17,300 lbs. before alternate, reserve, and other planning numbers.
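For those who like to see the quick math written down, here is a minimal Python sketch of that rule of thumb. The function names, the default numbers, and the 15 percent tolerance are assumptions for illustration only; the defaults are simply the G500 figures quoted above, and none of this is a flight planning tool.

```python
def rough_fuel_lbs(hours, climb=2000, first_hour=3300, later_hours=3000):
    """Rule-of-thumb trip fuel in lbs for a whole number of cruise hours.

    The defaults are the rule-of-thumb G500 numbers at Mach 0.90. They are
    a cross-check on the computer, not a replacement for it.
    """
    if hours < 1:
        raise ValueError("this sketch only covers flights of one hour or more")
    return climb + first_hour + later_hours * (hours - 1)


def burn_looks_sane(computed_burn_lbs, estimate_lbs, tolerance=0.15):
    """True if the computer's burn is within a (hypothetical) 15% of the estimate."""
    return abs(computed_burn_lbs - estimate_lbs) <= tolerance * estimate_lbs


estimate = rough_fuel_lbs(5)
print(estimate)                            # 2,000 + 3,300 + 4 x 3,000 = 17300
print(burn_looks_sane(17000, estimate))    # True: close enough to believe
print(burn_looks_sane(-10300, estimate))   # False: the airplane is "making gas"
```

The point of the second function is only that a number wildly different from the mental estimate deserves a second look before the fuel truck shows up.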

Of course this is a case of an amateur computer programmer's mistake. The pros make mistakes too. But before we get to that, let's stop for a moment to discuss what exactly it means to say a computer is an 8-bit computer versus the models that followed.

2

Going digital means going binary first

Base 10

Most of us humans have an instinctive understanding of "Base 10," a numbering system based on the total number of fingers we have. We have concocted ten symbols (0, 1, 2, 3, 4, 5, 6, 7, 8, and 9) to represent varying numbers of things, including a symbol that represents no number of things. When it comes time for more than 9, we append a symbol to the left and that means that number of things times 10. That continues as you add digits to the left with even greater multipliers. Okay, we all get that. This system is very efficient, in that you can represent a very large number of things with a small number of symbols. We could say we have 1,347 of something, or we could draw a hash mark that many times. Base 10 is very nice.

Binary

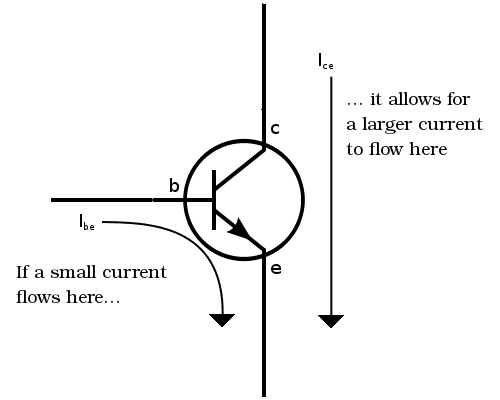

Computers don't have ten fingers, so Base 10 is out of the question. What a computer does have are lots and lots of switches. That's all a transistor is, after all, an electronic switch. To understand how a transistor works, consider a mechanical switch:

A simple transistor has three wires, using one wire to signal a "yes" or a "no" to allow current to travel between the other two wires. It is a basic switch.

Binary is "Base 2," which has just two symbols for counting. They are "0" and "1" and operate much the same way Base 10 operates, but not as conveniently. Each zero or one is a "bit," which stands for "binary digit." Let's count from zero to four using bits:

0

1

10

11

100

Cumbersome, eh? This is the language of a computer processor.
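Here is a small Python sketch of the same count, for anyone who wants to see where the digits come from. The function name is made up for this example; Python's built-in bin() would give the same digits with a "0b" prefix.

```python
def to_binary(n):
    """Convert a non-negative whole number to Base 2 by repeated division by 2."""
    if n == 0:
        return "0"
    digits = []
    while n > 0:
        digits.append(str(n % 2))  # the remainder is the next bit, right to left
        n //= 2
    return "".join(reversed(digits))


for value in range(5):
    print(value, to_binary(value))
# 0 0
# 1 1
# 2 10
# 3 11
# 4 100
```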

8-bit

Here is the number 255:

11111111

That's 8 ones. That is significant because that means eight switches (on or off) can be combined to represent 256 numbers. (Remember to add the initial zero.) Another way to write this is with the number 2 raised to the power of 8, or 2^8. A group of eight bits is what is called a "byte" and with it you can represent a lot of characters, including those numbers from 0 to 9. We have a standard called ASCII (American Standard Code for Information Interchange) that assigns letters, numbers, and other symbols to those values. (Strictly speaking, ASCII itself defines only the first 128 values, 0 through 127; the rest are used by various extended character sets.) Let's say you wanted to represent the uppercase letter "A" for example.

The binary answer is a series of 8 bits: 0 1 0 0 0 0 0 1. You can convert this to the ASCII decimal value by adding up what each 1 is worth, reading from right to left. The first 1 is in the "0" position, so that comes to 2^0 = 1. The other 1 is the seventh bit, in the "6" position, so that comes to 2^6 = 64. So A is represented by 1 + 64 = 65.
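The same right-to-left bookkeeping can be sketched in Python. The function name is hypothetical; chr() is the standard Python call that turns a character code into its character.

```python
def bits_to_decimal(bits):
    """Add up 2**position for every 1 bit, counting positions from the right."""
    total = 0
    for position, bit in enumerate(reversed(bits)):
        if bit == "1":
            total += 2 ** position
    return total


code = bits_to_decimal("01000001")
print(code, chr(code))               # 65 A
print(bits_to_decimal("11111111"))   # 255
```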

32-bit

Let's try something a little longer, the number 4,294,967,295:

11111111111111111111111111111111

That's 32 ones, or 32-bit. Why is that important? Read on . . .

3

An Air Traffic Control Center's reboot

On September 14, 2004, the U.S. air traffic control system flirted with disaster because somebody forgot what I consider a cardinal rule with computers: every now and then they need a rest. I always felt there was a good reason to reboot now and then, but it took this incident to solidify this feeling into cold, hard reason.

- As many as 800 commercial airline flights bound for Southern California were diverted and all takeoffs from the Southland’s major airports were halted after radio and radar equipment failed for 3 1/2 hours at a major air traffic control center in the Mojave Desert on Tuesday.

- The diverted flights landed at airports in Northern California and other states, officials said, creating a massive air traffic snarl that was expected to last into today. Planes scheduled to take off for Southern California were held on the ground at airports nationwide.

- A computer glitch at 4:40 p.m. apparently caused the radio and radar failures at the Los Angeles Air Route Traffic Control Center in Palmdale, which handles cruise-altitude air traffic across Southern California and most of Arizona and Nevada, an area of about 178,000 square miles.

- Without warning, radios went dead and radar screens went blank. An official with the air traffic controllers union, Hamid Ghaffari, said a seldom-used backup system came up “for a couple of minutes, and then it failed too.”

- Exactly what went wrong was not immediately determined.

Source: LA Times

- The radios were down for about three hours, during which time the controllers used their personal cell phones to contact other traffic control centers to get the aircraft to retune their communications. There were no accidents but, in the chaos, ten aircraft flew closer to each other than regulations allowed (five nautical miles horizontally or two thousand feet vertically); two pairs passed within two miles of each other. Four hundred flights on the ground were delayed and a further six hundred canceled. All because of a math error.

- Official details are scant on the precise nature of what went wrong, but we do know it was due to a timekeeping error within the computers running the control center. It seems the air traffic control system kept track of time by starting at 4,294,967,295 and counting down once a millisecond. Which meant that it would take 49 days, 17 hours, 2 minutes, and 47.295 seconds to reach 0.

- Usually, the machine would be restarted before that happened, and the countdown would begin again from 4,294,967,295. From what I can tell, some people were aware of the potential issue, so it was policy to restart the system at least every thirty days. But this was just a way of working around the problem; it did nothing to correct the underlying mathematical error, which was that nobody had checked how many milliseconds there would be in the probable runtime of the system. So, in 2004, it accidentally ran for fifty days straight, hit zero, and shut down. Eight hundred aircraft traveling through one of the world's biggest cities were put at risk because, essentially, someone didn't choose a big enough number.

Source: Parker, pp. 303 - 299 (the pages are numbered backwards)
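The 49-day figure is easy to check. The Python sketch below converts the biggest 32-bit number from milliseconds into days, hours, minutes, and seconds. The tick_down function is purely hypothetical: it shows what a bare 32-bit register does if it simply wraps around at zero, which is not necessarily what the control center's software did (by the account above, it shut down).

```python
MAX_32_BIT = 2**32 - 1  # 4,294,967,295: thirty-two 1s in binary


def split_milliseconds(ms):
    """Break a millisecond count into (days, hours, minutes, seconds, milliseconds)."""
    seconds, millis = divmod(ms, 1000)
    minutes, seconds = divmod(seconds, 60)
    hours, minutes = divmod(minutes, 60)
    days, hours = divmod(hours, 24)
    return days, hours, minutes, seconds, millis


def tick_down(counter):
    """One millisecond tick of a hypothetical unsigned 32-bit countdown."""
    return (counter - 1) & 0xFFFFFFFF  # the mask mimics a 32-bit register


print(split_milliseconds(MAX_32_BIT))  # (49, 17, 2, 47, 295)
print(tick_down(0))                    # 4294967295: zero wraps back to the top
```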

There have been similar instances involving the number 4,294,967,295 with the Microsoft Windows 95 operating system and the generator control units in the Boeing 787. In the case of the Boeing, it was half the number.

- There is a massive clue if you look at the number 4,294,967,295 in binary. Written in the 1s and 0s of computer code, it becomes 11111111111111111111111111111111; a string of thirty-two consecutive ones.

- What the Microsoft, air-traffic control, and Boeing systems all had in common is that they were 32-bit binary-number systems, which means the default is that the largest number they can write down is thirty-two 1s in binary, or 4,294,967,295 in base-10.

- Thankfully, this is a can that can be kicked far enough down the road that it does not matter. Modern computer systems are generally 64-bit, which allows for much bigger numbers by default. The maximum possible value is of course still finite, so any computer system is assuming that it will eventually be turned off and on again. But if a 64-bit system counts milliseconds, it will not hit that limit until 584.9 million years have passed. So you don't need to worry: it will need a restart only twice every billion years.

Source: Parker, pp. 303 - 299 (the pages are numbered backwards)
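To compare the two register sizes side by side, here is a rough Python sketch. The function name is made up, and the simple 365-day year is an assumption on my part; it happens to reproduce the 584.9 million year figure quoted above.

```python
SECONDS_PER_YEAR = 365 * 24 * 60 * 60  # simple 365-day year, an assumption


def counter_lifetime_years(bits, ticks_per_second=1000):
    """Years until an unsigned counter of the given width runs out of numbers."""
    max_value = 2**bits - 1
    return max_value / ticks_per_second / SECONDS_PER_YEAR


print(round(counter_lifetime_years(32) * 365, 1))   # 49.7 (days)
print(round(counter_lifetime_years(64) / 1e6, 1))   # 584.9 (millions of years)
```

Doubling the number of bits does far more than double the time available: every added bit doubles it again.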

If you ever had a 32-bit computer that you allowed to go into "sleep mode" regularly rather than wait for the full boot up process the next day, you may have noticed the computer crashed on you every now and then. Perhaps it was 49 days since its last restart. Now you know why.

Technophobe Technique

In the days following 9/11/2001, a NOTAM was issued in the U.S. requiring crews to monitor emergency frequency 121.5 MHz but that mandate was allowed to expire. In some parts of the world there are backup air traffic control frequencies. Well, guess what? There is one here too and you should make use of it: 121.5 MHz.

4

The F-22 and the international date line

If you've ever flown an airplane that came right from the factory you will probably have experienced what the U.S. Navy calls the shakedown cruise, an initial outing to shake out all the glitches. But in an airplane the shakedown lasts several months. If you are flying a brand-new aircraft type, it could last years.

- Let's consider the F-22 Raptor date line incident. The F-22, at the time, was the pride and joy of the U.S. Air Force. It cost $360 million per aircraft.

- In February of 2007, the first deployment was made to Japan. They flew a half dozen aircraft across the ocean, across the dateline, to get to Japan. Fortunately they had some tankers with them because when they flew across the dateline, their computers crashed.

- The planes had crashed computers; they had no navigation, they had no communications, they had no fuel management. The only thing that was still working was the fly-by-wire system. So they were able to fly, but they couldn't communicate, they couldn't do anything else.

- They were able to visually follow the tankers back to Hawaii and they got lucky to where they had enough fuel so they were able to land. If they had poor visibility or if something had gone wrong, the pilots would have had to eject and they could have lost all six aircraft.

- The cause was said to be, well, quote, it was a computer glitch in the million lines of code. Somebody made an error in a couple of lines of code and everything goes.

Source: Koopman

- If you're finding it hard to get your head around this, you're not alone. The International Date Line causes all sorts of confusion, and whoever was programming the F-22 must have struggled to work it out. The U.S. Air Force had not confirmed what went wrong (only that it was fixed within forty-eight hours), but it seems that time suddenly jumped by a day and the plane freaked out and decided that shutting everything down was the best course of action.

- Midflight attempts to restart the system proved unsuccessful so, while the planes could still fly, the pilots couldn't navigate. The planes had to limp home by following their nearby refueling aircraft.

Source: Parker, pp. 286 - 285 (the pages are numbered backwards)
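Nobody outside the program has said what the F-22's code actually did wrong, so the Python sketch below is not a reconstruction of that bug. It only illustrates how a perfectly smooth flight can look like a huge jump to software once a coordinate wraps around, using longitude as the example. The function names and the sample positions are made up.

```python
def naive_delta(lon_from, lon_to):
    """Longitude change by plain subtraction, in degrees (east is positive)."""
    return lon_to - lon_from


def wrapped_delta(lon_from, lon_to):
    """Longitude change taking the short way around, in the range -180 to 180."""
    return (lon_to - lon_from + 180) % 360 - 180


# Westbound across the 180th meridian: from 179.5 West to 179.5 East
print(naive_delta(-179.5, 179.5))    # 359.0: looks like a trip around the world
print(wrapped_delta(-179.5, 179.5))  # -1.0: really just one degree to the west
```

Time has the same kind of seam at the date line, where the local calendar date changes by a day in an instant.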

Technophobe Technique

Be mindful of the aircraft's limitations and history. If it hasn't been done before, realize you are a de facto test pilot and develop an exit strategy for those periods where you are quite literally flying into the unknown. A better plan would be to leave the experimental flying to the experimental test pilots.

5

The Boeing 787 and dreams of simpler systems

In 2015 the FAA issued an Airworthiness Directive that, at first reading, seemed more of a prank than anything else. When have you ever heard of an aircraft electrical system with a calendar-based limitation? Well, here you go:

We are adopting a new airworthiness directive (AD) for all The Boeing Company Model 787 airplanes. This AD requires a repetitive maintenance task for electrical power deactivation on Model 787 airplanes. This AD was prompted by the determination that a Model 787 airplane that has been powered continuously for 248 days can lose all alternating current (AC) electrical power due to the generator control units (GCUs) simultaneously going into failsafe mode. This condition is caused by a software counter internal to the GCUs that will overflow after 248 days of continuous power. We are issuing this AD to prevent loss of all AC electrical power, which could result in loss of control of the airplane.

I read that AD from cover to cover for signs it was a joke. Why does a GCU care about the 248th day any more than the 247th? There were a number of "tech blogs" at the time that hypothesized there was a timer in the GCU that counted in 100ths of a second and that 2^31 = 2,147,483,648 hundredths of a second, or 21,474,836.48 seconds, which divided by the 86,400 seconds in a day equals about 248.55 days, and therefore the computer in the GCU couldn't count beyond 2^31 for some reason.

But that makes no sense at first glance. Why 2^31 and not 2^32? What if you dedicate the first bit of that 32-bit data register to a plus or minus sign? That would leave you with 31 bits for the count, enough for 248 days, 13 hours, 13 minutes, and 56.47 seconds counting in 100ths of a second. The fix has been to require the airplane to be rebooted more often.
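The arithmetic behind the 248-day figure can be checked with a short Python sketch. Keep in mind the AD says only that a software counter overflows; the signed 32-bit register counting 100ths of a second is the hypothesis described above, and the function names here are made up.

```python
MAX_SIGNED_32_BIT = 2**31 - 1  # 2,147,483,647: one sign bit plus thirty-one 1s


def split_centiseconds(cs):
    """Break a count of 100ths of a second into (days, hours, min, sec, 100ths)."""
    seconds, hundredths = divmod(cs, 100)
    minutes, seconds = divmod(seconds, 60)
    hours, minutes = divmod(minutes, 60)
    days, hours = divmod(hours, 24)
    return days, hours, minutes, seconds, hundredths


def wrap_signed_32(n):
    """Show what a signed 32-bit register would hold if asked to store n."""
    n &= 0xFFFFFFFF
    return n - 2**32 if n >= 2**31 else n


print(split_centiseconds(MAX_SIGNED_32_BIT))  # (248, 13, 13, 56, 47)
print(wrap_signed_32(MAX_SIGNED_32_BIT + 1))  # -2147483648: one tick too many
```

One tick past the maximum and the count flips to a large negative number, which is the kind of surprise that could plausibly send a control unit into failsafe mode.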

Technophobe Technique

As the aircraft I've flown have grown in complexity so has the "cold boot" process. I've noticed that if it doesn't go the same way it usually does, chances are something isn't going to work correctly later in the flight. I think you should carefully watch and record what a normal boot up looks like. It may save you time in the long run to reboot when the cold boot doesn't happen normally. After ten years with the Gulfstream G450 we got pretty good at predicting this. If you are going to have to reboot anyway, it is better to get it over with early rather than have to do almost the entire preflight and the reboot. With our new aircraft, we are still learning. And still recording.

6

Lines of code

What is a line of code?

The Air Force Boeing 707s I flew did not have Flight Management Systems until the last year I flew them. This FMS was crude and it didn't do anything we couldn't do without it, so we taught our crews to simply turn it off when it did something we didn't understand.

Those days are long gone and I don't think I've seen an FMS with an ON/OFF switch ever since. In fact, the aircraft I am flying today (a Gulfstream GVII-G500) cannot fly without the FMS.

- Item: Flight Management System (FMS) Function

- Category: B

- Number Installed: 3

- Number Required for Dispatch: 1

Source: GVII MMEL, p. 34-24, Sequence No. 32

You often hear manufacturers beg forgiveness for technical glitches by saying it was impossible to predict because of the millions of lines of code in the aircraft's computer systems. What does that mean? Consider a student computer programmer's first program, the so-called "Hello World Program" written in a computer language that is so basic, it is called BASIC (Beginner's All-purpose Symbolic Instruction Code).

10 PRINT "Hello World!"

That's one line of code. Now let's make it just a little more complicated:

10 PRINT "Hello World!"

20 GOTO 10

Now you have two lines of code and there is an error. The error is called an "endless loop" because the program will just keep printing "Hello World!" over and over without end.

It was an easy error to spot. The F-22 Raptor's error was said to be somewhere amongst 2 million lines of code. My flight planning software for the Boeing 707 probably had just over 5,000 lines of code and the only way I found the glitch was to have someone actually attempt to fly the specific condition.
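One way to hunt for that kind of hidden corner without waiting for someone to fly it is to sweep the whole envelope and look for answers that cannot be true. The Python sketch below is entirely hypothetical and is not my 1983 program: the fuel flow, the low-altitude factor, and the planted bug are invented stand-ins to show the idea.

```python
def leg_burn_lbs(hours, altitude_ft, flow_lbs_per_hr=6000):
    """Hypothetical fuel change for one leg; a negative number means fuel burned.

    The low-altitude branch has a planted sign error, standing in for any
    mistake that hides in a rarely used corner of the envelope.
    """
    if altitude_ft < 1000:
        return flow_lbs_per_hr * 1.7 * hours  # BUG (planted): should be negative
    return -flow_lbs_per_hr * hours


def find_impossible_burns(altitudes_ft, hours=1.0):
    """Sweep altitudes and return those where a leg appears to make gas."""
    return [alt for alt in altitudes_ft if leg_burn_lbs(hours, alt) >= 0]


print(find_impossible_burns(range(0, 45000, 500)))  # [0, 500]
```

A sweep like this only catches what you think to check for, which is why a skeptical human looking at the result still matters.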

An example of a serious line of code error

Gulfstream G550 on approach to Henderson Executive (KHND)

(Matt Birch, http://visualapproachimages.com)

The Gulfstream GV was a game changer in business aviation, offering unparalleled speed, distance, and efficiency. In 2004 the model was updated with the Gulfstream G550, the same basic airplane with a brand new avionics package. The glass cockpit with four large Display Units (DUs) was revolutionary and has gone on to be the cornerstone of some very good cockpits in the Gulfstream stable. The airplane got its type certificate on August 14, 2003. The next year a line of computer code caused all four DUs to blank on at least one crew while in flight. Before the code could be rewritten, Gulfstream issued Maintenance and Operations letter G550-MOL-2004-0020 with the following procedure if the DUs failed to recover within 90 seconds:

If DUs DO NOT recover, continue checklist with Step 7:

- Advise passengers that normal power will be off.

- Left and Right Generators (and APU Generator, if in use)......OFF

- Wait 30 seconds.

Gulfstream issued a software update (Aircraft Service Change 902) that fixed the broken code in December of 2004 but the FAA followed up the next year with an AD:

This AD was prompted by a report indicating that all four cockpit flight panel displays went blank simultaneously. There were also two reports of similar incidents occurring on the ground. The FAA is issuing this AD to prevent a software error from blanking the cockpit display units, which will result in a reduction of the flightcrew's situational awareness, and possible loss of control of the airplane. We are also issuing this AD to address noise interference in the avionics standard communication bus (ASCB), which can interfere with the display recovery after a blanking event and consequently extend the time that the displays remain blank.

The avionics suite in the G550 is a work of art and I can imagine there must be millions of lines of code behind it. Is it surprising that a line of code made the wrong decision reacting to noise interference? I don't know but I can certainly sympathize with the software engineers. What can we, as pilots, do about this? Let's look at one more example.

Example of a nuisance line of code error

We took delivery of our Gulfstream GVII-G500 within a year of the airplane's certification and we had on board a Gulfstream test pilot who was more than just a run-of-the-mill Entry into Service (EIS) demo or delivery pilot; he really knew his stuff. The first thing we did was fly three takeoffs and landings at KSAV for one of our pilots, each with an ILS approach and a quick reloading of the flight plan in the FMS, all handled by our EIS pilot. I already had two takeoffs and landings from our acceptance flights, so I just needed one more. I got into the seat with a reload of the flight plan to KBED. The FMS loaded with a speed of 160 knots and altitude of 180 feet. Try as we might, we couldn't change that. Our EIS pilot called all the experts and all we heard was "we've never heard that one before." Finally someone suggested pulling and resetting the Modular Avionics Units circuit breakers. That worked, but it made the cockpit go nuts. I told the EIS pilot, "let's never do that again."

The pilots at Gulfstream and FlightSafety were stumped. But how often did they fly a local pattern and then set up for a Point A to Point B trip? Perhaps never. I reached out to the GVII users group and a few operators had already found a workaround: hit the TO/GA button to fix things. That worked. After some experimentation, we discovered the problem only seems to happen after flying an ILS, shutting the engines down but leaving the avionics powered, and then entering or downloading another flight plan. We made several videos of the problem and now the avionics manufacturer is hunting for a line of code to fix.

Technophobe Techniques

Pooling knowledge

Manufacturers and training vendors tend to live in sterile bubbles where conditions tend to be predictable. There is no way they can anticipate and simulate every possible condition "out there" in the real world. It is in everyone's best interest if everyone flying a particular aircraft type connects to the rest of the population and shares experiences. In the few months I have been flying the airplane I've already discovered a few problems that stumped Gulfstream and FlightSafety but found answers in the GVII users group. "They" built the airplane or train us to fly it. But "we" have more relevant, real world experience. We should share that knowledge.

More technology

Can you imagine flying across the North Atlantic at night and ending up with nothing more than a standby attitude indicator? For around $400 to $700 you can buy a portable WAAS GPS equipped with ADS-B in and a backup attitude system that will link with an iPad. This could be a life saver the next time ATC shuts down, or you discover a broken line of software code. That is cheap insurance for a multi-million dollar aircraft.

Appareo Aviation Stratus ADS-B Receivers

James,

I know this is going to sound stupid, but what happens to the airplane when you go from west longitudes to east? Are there any special pilot procedures needed?

Signed: an international pilot novice

Novice,

Like many things in aviation, things like this were an invitation to mistakes in the past, but technology tends to make it easier in the present. Back in the days when I flew with navigators and those navigators had to type in each latitude / longitude position with a numeric keypad to a very basic inertial navigation system, getting in the habit of typing the key that represented "W" could get you lost in a hurry. (It happened to me once flying from Alaska to Japan and the aircraft ended up turning the wrong way, pointed at the USSR in the days when that could get you shot out of the sky.)

These days, it is pretty automatic. You download the entire flight plan at once and watch the magic happen:

Of course, as the F-22 crews mentioned earlier found out, this depends on the aircraft being designed correctly!

James

References

(Source material)

Airworthiness directive, 2005-04-06 Gulfstream Aerospace Corporation: Amendment 39-13978. Docket No. FAA-2005-20280; Directorate Identifier 2004-NM-254-AD, Effective February 23, 2005.

Airworthiness directive, 2015-NM-058AD, Boeing 787

Gulfstream GVII-G500/G600 Master Minimum Equipment List (MMEL), Revision 1, 06/21/2019

Gulfstream Maintenance and Operations Letter, December 22, 2004, G550-MOL-04-0020, AFM Revision 8 Addresses Cockpit Flight Panel Display Units Blanking

Koopman, Philip, "System level Testing," Carnegie Mellon University lecture 18-642, 9/27/2017

Parker, Matt, "Humble Pi: When Math Goes Wrong in the Real World," Riverhead Books, 2019, U.K.

"System Failure Snarls Air Traffic in the Southland," Los Angeles Times, Sept 15, 2004
