The very nature of pilot licensing instills in us the need to constantly become better at what we do. A brand-new private pilot wants to add multi-engine land and instrument ratings, followed by a commercial license and, perhaps, an Airline Transport Pilot (ATP) rating. We aren’t alone in this type of career stratification, but few professions have as many levels on the way from novice to professional. Before I got my ATP back in 1986, I could think of nothing loftier. Since then, I’ve noticed many ATPs abandon the quest for more and more knowledge and start to coast. They might still aspire to be better pilots, but the need to become better is no longer a priority. What is lost on these goal-less pilots is that in the quickly changing world of aviation, if you fail to move forward, you will fall behind.

— James Albright

Updated: 2021-06-20

I don’t mean to say these pilots are not keeping current and proficient in the mechanics of flying airplanes and the procedures drilled into them during training. They are more than likely becoming better pilots when it comes to method, by sheer repetition and regular training. I do mean to say that pilots who believe they have “done it all” and have no more goals to achieve have philosophically stopped improving. It is a question of philosophy over methodology.

I believe that if you are already a highly accomplished aviator, you need to continuously work on and improve your pilot philosophy. A pilot whom I look up to very much has three rules of aviation that spell out a philosophy I think I’ve grown into over the years but have never articulated. You may already have such a philosophy that serves you well. Whether you do or not, please consider these in your quest to become a better pilot.

1

Don't get busy

Rule 1

We all grew up with stories of pilots with ice water in their veins and neurons fast enough to make the hard choices without breaking a sweat. Speed is life, we hear. Make a decision now or die! You cannot survive more than a few flights as a professional aviator before realizing it is all a myth. Speed is not life; in fact, speed kills. Rule Number One: don’t get busy. This applies to normal as well as abnormal operations.

Take, for example, the pesky problem of remembering to extend your flaps to the takeoff position before takeoff. Aircraft manufacturers have known this is a problem for us pilots and have tried to make the system idiot-proof, ignoring the adage that this is impossible to do because we idiots are so ingenious.

The venerable Boeing 707 had a safety system that sounded a warning horn if the throttles were advanced to a certain angle without the flaps set. The crew of a Pan American World Airways Boeing 707 taking off from Anchorage, Alaska, on a cold day found out this safety system had a flaw. They perished in 1968 because they forgot their flaps and the temperature was cold enough that the engines reached takeoff thrust before the throttles reached the angle that triggered the horn. The crew had become distracted by the need to rework their fuel planning when their filed oceanic altitude became unavailable. They retracted the flaps they had previously set, became rushed at the last minute, and forgot to reset them.

Okay, you say, your airplane has your back. Mine too. My Gulfstream GVII has an electronic checklist that watches the flaps for me and checks off that item once I set them. Before I take off, it gives me an itemized list of what needs to be done before I can safely take off. I am completely covered! Or am I?

Even with the latest technology, there remains the classic “garbage in, garbage out” problem, where a rushed pilot skips a few steps and ends up fooling the foolproof computer into assuring everyone the situation is perfectly normal. That is precisely what happened to an MK Airlines Boeing 747 cargo aircraft at Halifax International Airport, Nova Scotia, Canada (CYHZ), on October 14, 2004. The crew neglected to update the takeoff weight from the aircraft’s previous takeoff, so their rotation speed was based on a much lower weight; it was more than 30 knots too low. The crew was allowed to use a laptop computer to compute the performance data and to have another person verify the data using the same computer. Both pilots made the same mistake and neither noticed the error. The aircraft failed to lift off at the computed speed, became airborne beyond the paved surface, and hit an earthen berm. It became airborne again, then impacted the terrain and burst into flames, killing all seven people on board.

In many of these takeoff accidents, the crew was for some reason rushed and skipped an important step when configuring the aircraft or computing performance data. Getting busy with other chores can create the perfect distraction. The situation is perfectly normal, after all. But what about a no-kidding emergency? Then, you must admit, speed is life! No, it almost never is.

The classic case of pilots rushing to fix something that could have waited is an engine failure during takeoff. On February 4, 2015, a TransAsia Airways ATR-72 took off from Taipei Songshan Airport, Taiwan (RCSS), and the right engine failed shortly afterward. As the aircraft climbed through 1,200 feet, the right engine autofeathered, and the master warning system indicated “ENG 2 FLAME OUT AT TAKE OFF” and listed the appropriate procedure. The captain pulled back the throttle of the wrong engine. Though the first officer initially responded, “wait a second cross check,” once directed to shut down the wrong engine, he did just that. The captain didn’t realize his error until the aircraft was descending with the stick shaker activated. There was no indication of fire and no reason to immediately pull back any throttles at all.

We are often counseled in class to slow down and resist the urge to act immediately. “Go get a cup of coffee first” or “wind the clock,” we are told. My favorite: “What’s the first thing you should do in an emergency and when should you do that?” Answer: “The first thing I should do is nothing and I should do that immediately.”

But then we spend our time in the simulator practicing items where slowing down is the wrong answer. Getting a cup of anything is no doubt the wrong answer when an engine blows up on you at 100 knots or you have a rapid depressurization at Flight Level 450. Things that require immediate action should not only be reacted to immediately, but they should also be practiced often. The Flight Standardization Board Report for my aircraft, the Gulfstream GVII, lists these as critical steps that must be accomplished without reference to any documentation: engine fire/auxiliary power unit (APU) fire, engine failure after V1, cabin pressure low/emergency descent, engine exceedance, Enhanced Ground Proximity Warning System (EGPWS)/windshear/Traffic Alert and Collision Avoidance System (TCAS) alerts, sidestick fail, ground spoilers armed, and brake-by-wire fail on the ground. You may have to build your own list, but there is a finite list of procedures that must be accomplished immediately. For all others, you need a routine that helps you slow down.

My technique is to announce to the crew the symptoms of the problem, the fact that I am flying the airplane, the status of the automation, and any constraints that could affect the resolution. I do not hypothesize about the cause, the required checklist, or possible solutions until the crew has had a chance to analyze the situation. In the case of the TransAsia ATR, for example: “We have an Engine Two Flame Out at Take Off indicated by the Warning System. I have control of the aircraft with the autopilot engaged and properly controlling engine-out yaw.” And that is all until the first officer has a chance to catch up.

2

Don't get smart

Rule 2

Pilots are smart people, no doubt about it. How can you routinely defy gravity without being smart? You regularly fly very smart people in back who cannot do what you do in front. So, I think it is safe to say, you are smart. But are you smart enough for all situations? One way to keep your “smartness” in check with a measure of humility is to study the examples of other very smart pilots who made very dumb decisions and learned the hard way that they didn’t know everything they needed to know.

On March 9, 2019, a Gulfstream GIV crew found themselves arriving at Atlanta-DeKalb Peachtree Airport, Georgia (KPDK), about 20 minutes before their planned runway was scheduled to open. Runway 21L/03R is 6,001 feet long and routinely handles aircraft like the Gulfstream GIV. The only option until that runway opened was Runway 34, which was only 3,967 feet long. The aircraft’s performance computer said they needed only 2,800 feet, so they opted for the shorter runway.

When crossing the end of the runway, in the words of one of the pilots, “suddenly a hard impact occurred.” Damage to the fuselage was listed as “substantial.” The pilots apparently failed to realize the distance between their main gear and their visual aimpoint far exceeded the physical distance between the cockpit and the main gear. They were not as smart as they thought they were.

If you are flying an airplane the size of a Gulfstream GIV, your wheels touch down hundreds of feet short of your aimpoint if you don't flare. This is a critical piece of information few pilots understand: Aim Point vs. Touchdown Point.

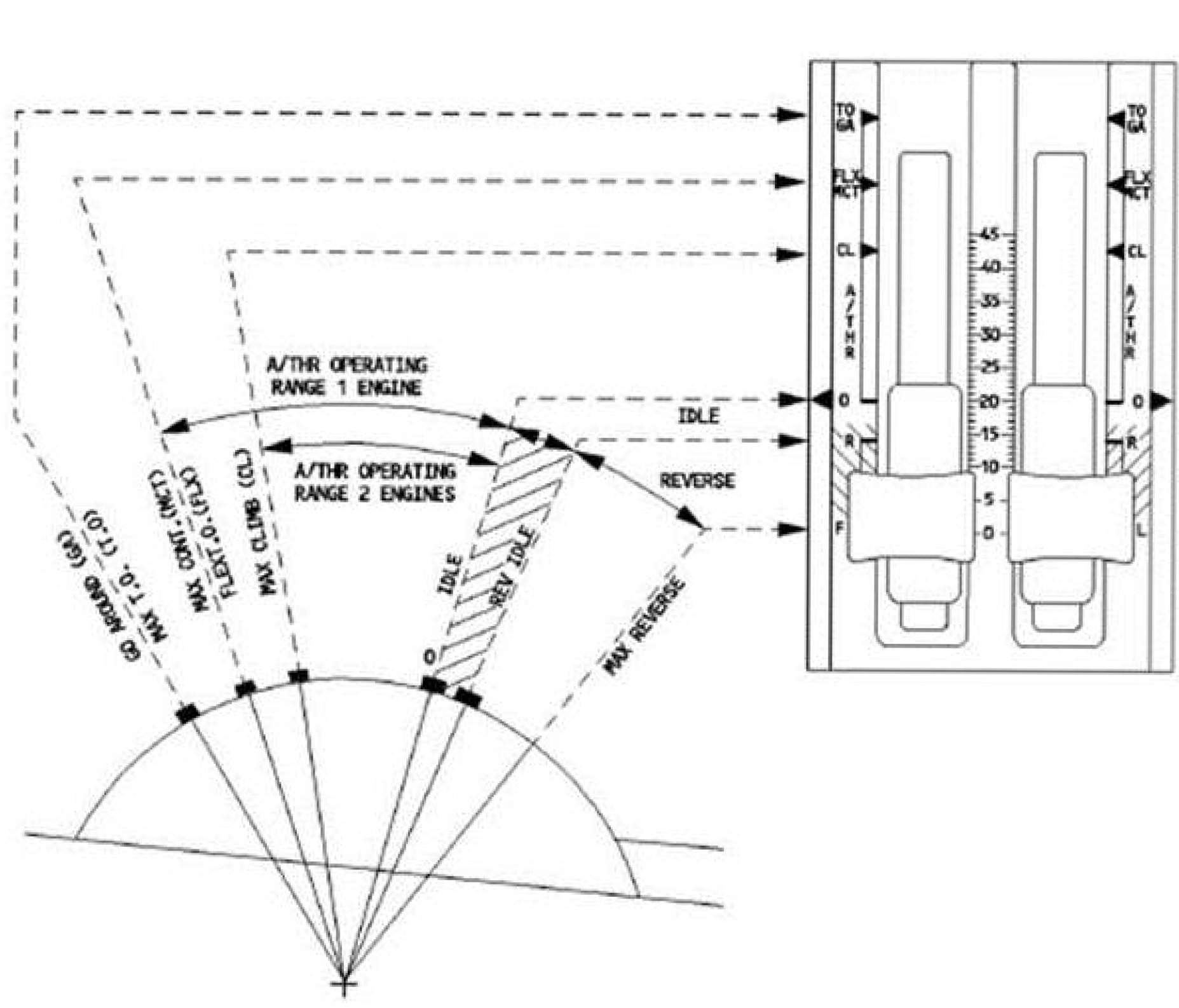
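The size of that gap can be sketched with a simplified, no-flare geometric model. Assume a constant glidepath angle γ, and let h be the height of the pilot’s eyes above the bottom of the main gear and x the horizontal distance from the pilot’s eyes back to the main gear, both measured while established on the approach. These symbols, the 3° glidepath, and the sample values below are illustrative assumptions, not published Gulfstream GIV figures. If the pilot’s eyes track a straight line to the aimpoint, the main gear tracks a parallel line that is lower and farther back, so it reaches the ground short of the aimpoint by a distance Δ. With the pilot’s eyes a horizontal distance D from the aimpoint:

$$
\begin{aligned}
\text{eye height:}\quad & D\tan\gamma \\
\text{gear height and distance from aimpoint:}\quad & D\tan\gamma - h, \qquad D + x \\
\text{gear ground distance to touchdown:}\quad & \frac{D\tan\gamma - h}{\tan\gamma} = D - \frac{h}{\tan\gamma} \\
\Delta = (D + x) - \left(D - \frac{h}{\tan\gamma}\right) =\quad & x + \frac{h}{\tan\gamma}
\end{aligned}
$$

With hypothetical values of h = 15 feet, x = 40 feet, and γ = 3°:

$$
\Delta \approx 40 + \frac{15}{\tan 3^\circ} \approx 40 + 286 \approx 326 \text{ feet}
$$

The second term is the one that surprises pilots: on a 3° glidepath, every foot of eye-to-gear height moves the touchdown point about 19 feet (1/tan 3°) short of the aimpoint, which is why the gap can far exceed the cockpit-to-gear distance x. The model ignores pitch attitude changes, wind, and the flare, so treat it as a rough sketch rather than a performance calculation.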

On March 13, 2014, a US Airways Airbus A320 was substantially damaged after the captain elected to abort the takeoff once the aircraft was already airborne, with its aural warnings calling “Retard!” repeatedly. (The “Retard!” callout is meant to remind the pilot to bring the thrust levers to idle during the landing flare.) The case study is a fascinating exposé of poor Crew Resource Management and decision making. But it is also a case where the pilot’s excellent training wasn’t excellent enough. A series of programming mistakes left the airplane without displayed V-speeds during a reduced thrust (“Flex”) takeoff and set up a chain of warnings that cascaded into the faulty “Retard!” alerts and an “ENG THR LEVERS NOT SET” message. The correct response to that message was: “THR LEVERS . . . TO/GA.” When asked why he did not push the thrust levers to TO/GA after he received the “ENG THR LEVERS NOT SET” ECAM message and chime, the captain reported that it was “no harm” and left the thrust in FLEX.

According to interviews conducted for the investigation, before the accident flight none of the US Airways A320 line pilots or A320 check airmen had ever heard that a RETARD alert could occur on takeoff. What I find illuminating in this case study is the captain’s “no harm” statement. He was cavalierly smart, but not smart enough.

When I am the Second in Command (SIC) and the Pilot in Command (PIC) decides to do something outside Standard Operating Procedures (SOP), I like to put on my most sarcastic voice and say, “Sure! What can go wrong?” If I am the PIC, I hope the SIC will do me the same courtesy. Failing that, when pondering a solution that I consider “outside the box,” I try to visualize the possible accident report. Will my peers be reading about my actions and wondering, “What was he thinking?” Or will some future aviation writer be using my actions as a case study and conclude that I was smart, but not smart enough?

3

Do things for a reason

Rule 3

When I was a fledgling Boeing 707 copilot, one of my first captains would ask me why I had done something wrong, and I would come up with an answer. He would say, “Well, that’s a reason. It isn’t a good reason, but it is a reason.” That obviously scarred me for life, but in a good way. We always have reasons for what we do. We just need to make sure they are good reasons.

On January 3, 2009, a Learjet 45 crew destroyed their aircraft attempting to land at Telluride Regional Airport (KTEX), Colorado, during a period of heavy snow. The runway wasn’t plowed and the airport had no plans to clear it until the next morning. The weather was below approach minimums when they arrived. After holding, they attempted a straight-in approach but did not see the runway. They did spot the runway on their second approach but were too high to land. The PIC, in the right seat, said, “I mean we could almost circle and do it. Wanna try?” To which the pilot flying (PF), in the left seat, said, “I don’t.”

But they did, executing a right 360-degree turn, still ending up too high. Their bank angle reached 45° at several points.

PIC: “Cut it in tight.”

PF: “I need to maintain that altitude.”

PIC: “No . . . you’re gonna have to please.”

After they rolled out:

PIC: “Keep bringing it down. Keep it slow. See the lead in light, ah the blinker.”

PF: “No, I don’t see anything yet.”

PIC: “There’s the runway.”

PF: “Are you kidding me?”

PIC: “You need to be down.”

They touched down 20 feet to the right and off the runway. The aircraft’s wings were torn from the fuselage and the tail separated just aft of the engines.

The Learjet 45 is at best a Category C circling aircraft, while the LOC/DME Runway 9 approach is for Category A and B aircraft only. It is common practice for higher-category aircraft landing at mountain airports to use instrument approaches as aids leading to visual approaches. But whenever doing this, prudent crews will fully brief how they will do it and what they will do if the plan doesn’t work out. This crew briefed a divert airport before starting their second approach but never again verbalized the possibility. You can argue that the PIC’s overbearing nature prevented the PF from making any decisions at all. I would argue the PF’s eventual silence was a decision in and of itself. The rationale behind just about every decision along the way to the scene of the accident was faulty. But in the heat of battle, faulty reasoning can blind us. It could very well be that the PIC had successfully flown into Telluride in similar conditions with a similar ad hoc circling procedure. That would only reinforce his reasoning. I’ve seen this happen on a much larger scale.

In 1980, as a brand-new KC-135A tanker copilot, I was flying in the fifth airplane of a five-ship formation returning from a mid-Atlantic air refueling. We called these missions “fighter drags” because we took off fully loaded with fuel and dragged a flight of fighters halfway across the ocean, giving them every drop of gas that we could spare. Another formation of tankers would meet us halfway and take over, while we turned around and went home. On this flight, there was a long line of thunderstorms between us and our destination, Loring Air Force Base, Maine. Lead decided we needed to come left about 20 degrees to avoid the weather. I noticed our fuel reserve was just about gone. The captain in the left seat wasn’t concerned. “Don’t worry about it,” he said. “We got the top crew flying lead.” I kept quiet through each subsequent left turn until our heading read 180. I drew a globe on our flight plan with us in the upper hemisphere, pointed toward the South Pole. That’s all the captain needed. We broke formation and did our best navigating through the line of weather. We made it home a little late, but we made it. The formation gave up the southerly heading almost an hour after we departed the scene. None of them made it back to base; they diverted to a Canadian alternate. One of the airplanes flamed out three engines before landing.

The squadron rationalized it as an odd situation that nobody could have anticipated, and the entire episode was written off as “one of those things.” We pilots are good at that kind of rationalization, but the story has stuck with me whenever I am oceanic looking at a line of weather.

In the case of the Learjet or my tanker formation, wrong decisions can be made that seem right at the time. “Well, that’s a reason. It isn’t a good reason, but it is a reason.”

How do you inoculate yourself against this kind of reasoning in the heat of battle? I recommend the good old-fashioned war story. When you find yourself in one of those situations where you got lucky but would never make the same decisions again, don’t write it off as “one of those things.” Talk about it and let others know. At the very least it will keep the incident fresh in your mind, and it may save someone with less luck from the same situation. Accident reports are another great source of war stories. Read them with the attitude that the accident pilots were very good and that you could have made the same mistakes. As a safety officer from one of my earliest squadrons would say, “Nobody starts a flight and says, ‘Today I will crash an airplane.’ Never think you are immune to stupidity, because you aren’t.”

4

Being a better pilot

So far, next up

I’ve always considered myself an average pilot, which I admit is a strange thing to say for someone with over 10,000 hours flying jets and nine type ratings. But I honestly believe it, based on the evidence that just about everyone I fly with is a better pilot than I am. What I think sets me apart is a philosophy that keeps me from getting rushed in the cockpit, thinking I am smarter than the people who designed my aircraft, or coming up with ad hoc procedures in the heat of battle. We should all strive to become better pilots when it comes to the actual mechanics of flying our aircraft. But we must also focus on the thought processes that guide those methods. Paying attention to your pilot philosophy as well as your methodology will make you a better pilot.

So far, next up

I began this series with Being a Better Student, where the focus may have been on pilots, but the lessons apply to us all. Calling this second article “Being a Better Pilot” may suggest it is aimed exclusively at pilots, but I think the lessons are more universal than that. I will continue that idea with the next article, Being a Better Crewmember.